VisualizingFutureConceptswithBodyInputandAI

We have all witnessed the hype surrounding the new AI tools that have taken the creative industry by storm over the past year. This disruptive technology has forced everyone in this profession to feel either empowered or threatened by the new workflows. For us, it was clear that we wanted to combine the technology with our experience in designing interactive installations. We are driven by the opportunity to provide users with something that allows them to immediately express their own creativity. Our goal was to define a collaborative workflow between the user and the AI, one that is guided but still leaves enough creative freedom. An intuitive interaction and the ability to use one's own body as a design tool were essential for our application.

Read the article also on behance.

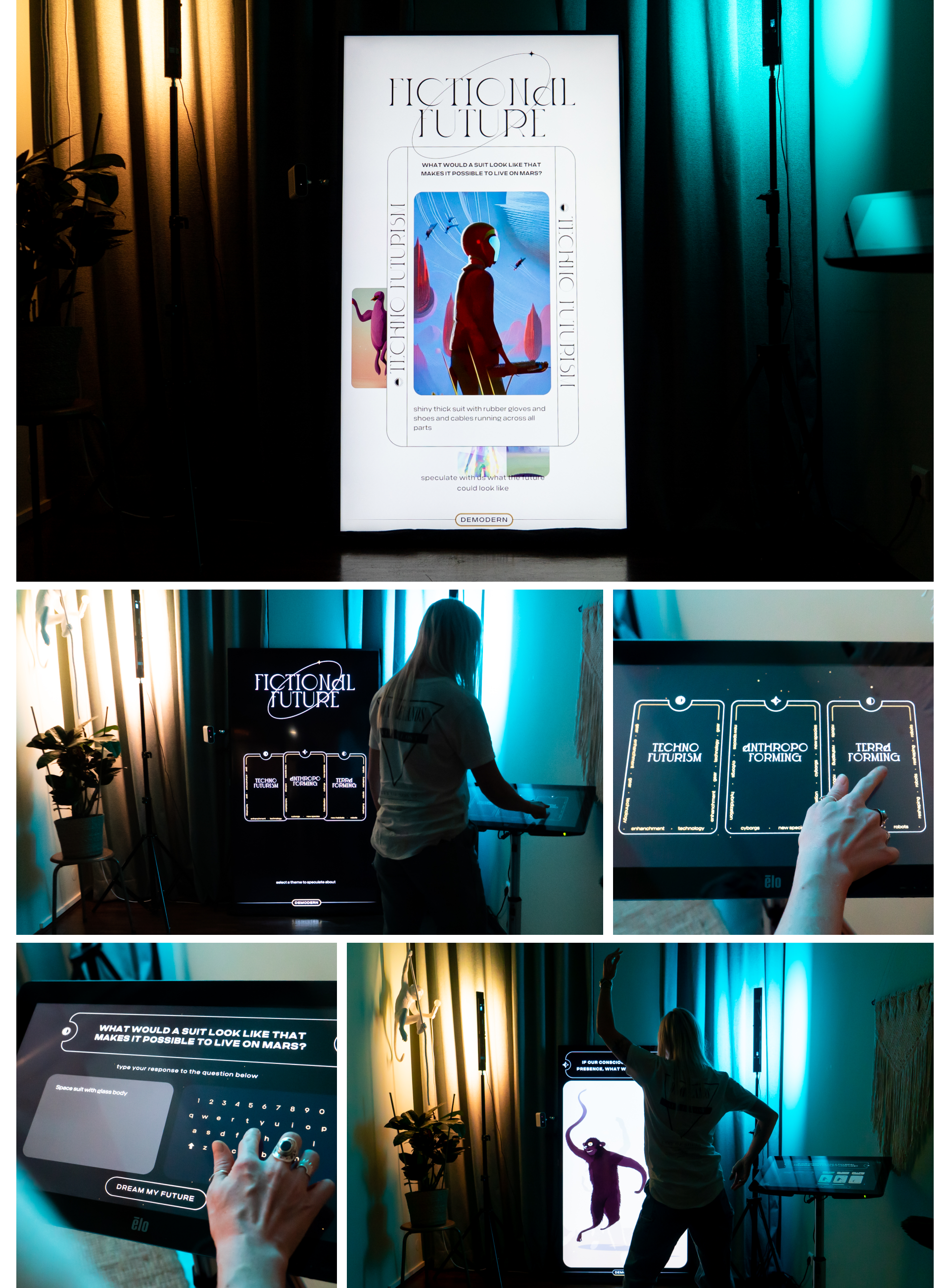

Future telling

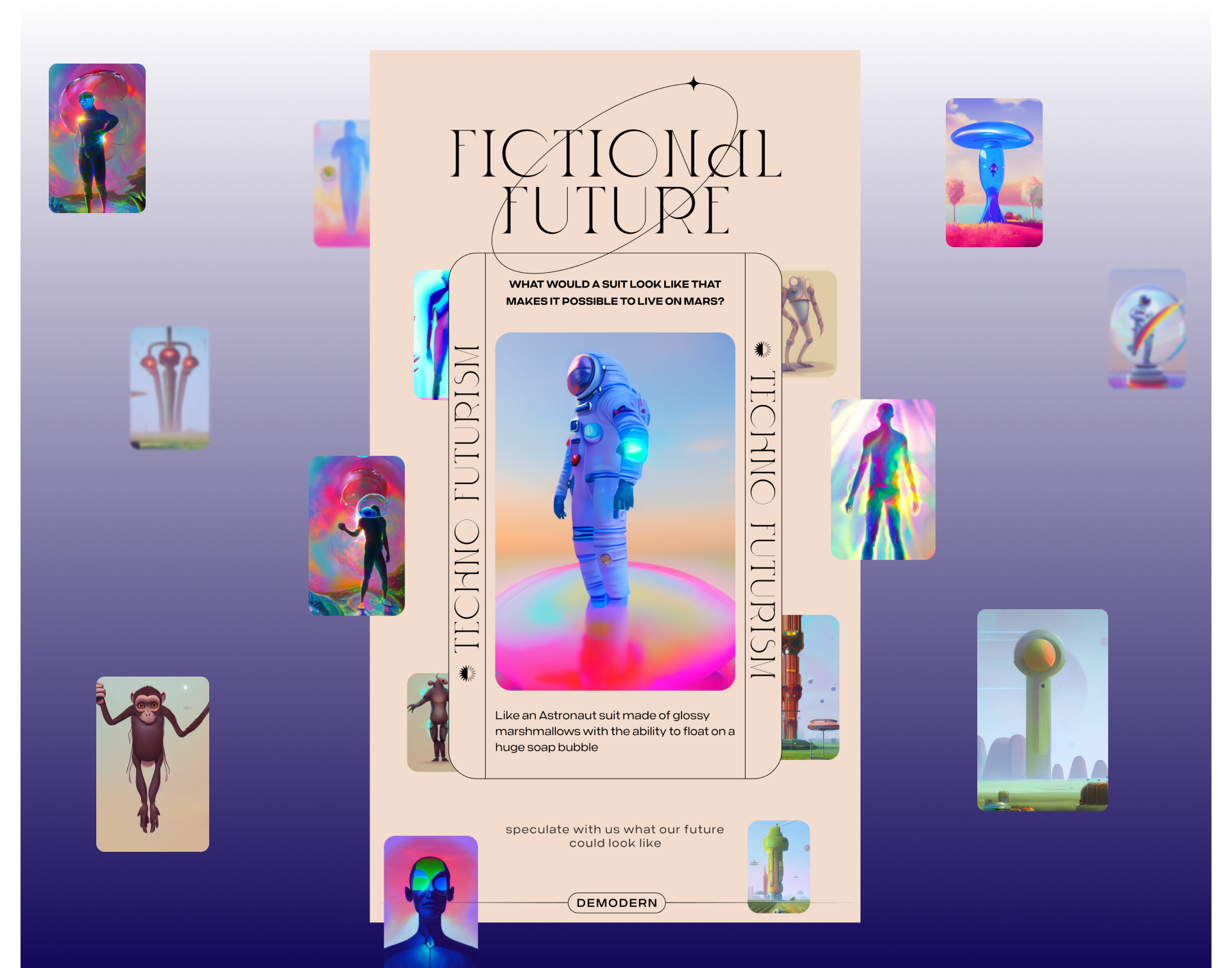

In UI and visual design, we wanted to reflect the favorable yet overly techno-optimistic view most people have on new technological developments. Some chase innovative ideas, hoping they will solve all existing problems. Therefore, the stylistic choice to integrate elements from tarot card reading seemed fitting. The decorative elements, inspired by Art Nouveau, also provided a strong analogy for the novel aesthetics of AI art.

Body-to-image generation

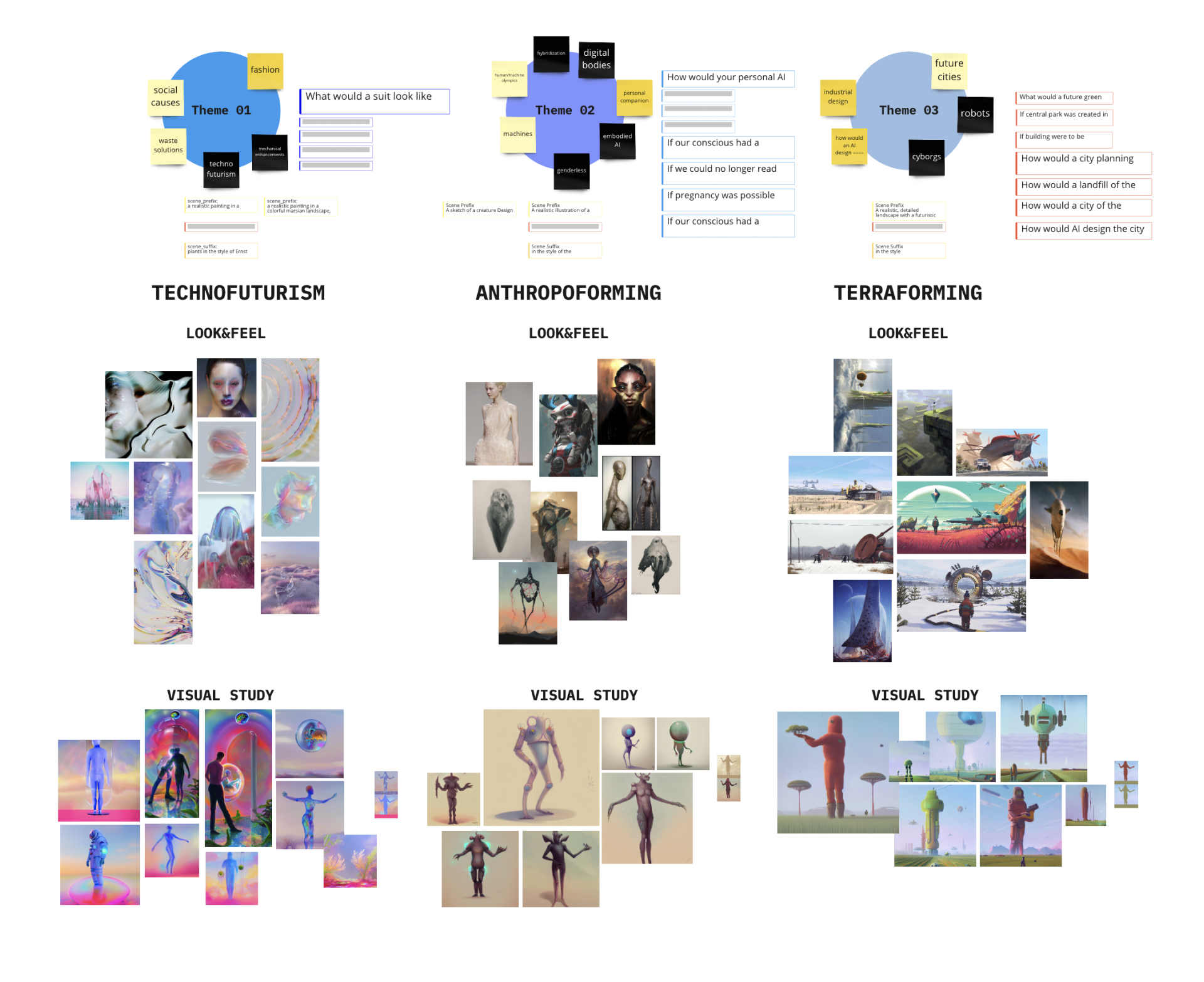

As a deep learning generator, we chose Stable Diffusion because it allowed us to have great control over the generation parameters. We then defined a conceptual and visual framework to guide the user and image generation to a cohesive result. The main task was to answer questions about an individual, speculative future product and input the answer via a touchpad. To optimize the user experience, we provided each visitor with three overarching themes. The user's response was then used as a text-to-image prompt for image generation. For each overarching theme, we developed an individual visual direction.

Controlling image distribution in a specific direction is the most time-consuming and challenging part of using deep learning tools. Besides thoughtful prompt design and a collection of well-functioning terms, it is important to be flexible regarding the outcome. The more accurately the presented concept is described, the more precise the image generation will be. It is recommended to work with overlapping concepts, as only a limited number of pixels can be used for generation. Too many conflicting ideas compete for limited space. Therefore, we developed a prompt pre- and suffix connected to the overarching theme. These prompts were not visible in the frontend and helped us create a cohesive look and feel for each theme by wrapping around the user-entered prompt. The mood boards created during the concept phase helped us find the most fitting terms.

In addition to text input, we also worked with input images for the foreground and background. This way, we created more variations for the noise suppression process during image distribution. The input images also allowed us to set color schemes for each theme.

Our most important input was the shape of the user's body, tracked via an infrared camera. This allowed the user to playfully influence the overall composition. The body acted as a mask, ultimately revealing the defined foreground image. In the user interface, users could see the shape of their body as a shadow silhouette. The influence was visible after a few seconds, showing the generated result. After experimenting with different text prompts in combination with their own body input, the three favored results could be selected and added to the collection of fictional future scenarios in idle mode.

Technical hurdles

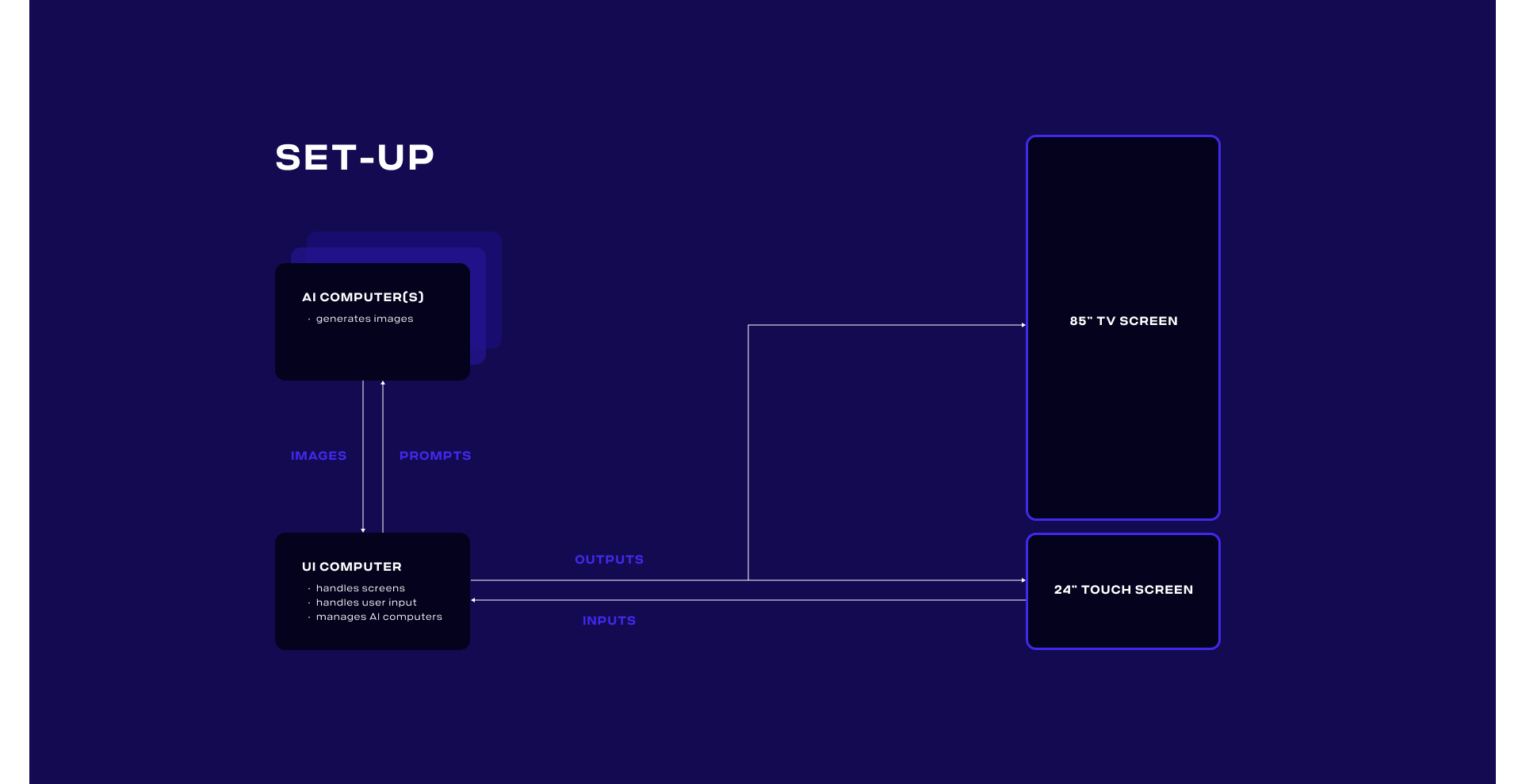

Direct feedback to the user on their inputs was a crucial aspect of the experience. Therefore, we split the image generation onto a dedicated high-end PC running a self-hosted Stable Diffusion installation with settings. This allowed us to render an image within 4-8 seconds. The user interacted with a separate client that could render the user interface and manage the render instructions. By adding more render PCs, we could have further increased the image refresh rate.

The final setup allowed users to create their own animation from their generated images. However, due to long render times and the lack of direct animation support in the selected Stable Diffusion model, this was impractical.

An idea with business potential

The Fictional-Future Experience was showcased as part of our Demodern showrooms. It generated so much interest that our client MINI decided to integrate the technology as part of the Sydney WorldPride campaign for the MINIverse, where users could generate their own LGBTQAI+ themed images from the MINIverse in their web browser or mobile device.